In the last post I argued that buildings should not need interpreters. That a facility manager standing in front of fourteen browser tabs at six in the morning should be able to ask her building a question in plain English and get a real answer back, with real numbers, with the right standard cited, with a chart she can hand to her board. The promise was simple. The architecture behind it is not. This post is about what we built to make that promise honest.

I want to skip the part where I restate the problem. If you are still on the fence about whether a fragmented dashboard stack is a tax on the people who actually run buildings, go read part one. Here I want to walk through GrydAI as a system. What it is, what tools we used, and the high level shape of the architecture that lets the agent behave like the engineer your facility manager wishes she could afford to hire.

What GrydAI Is

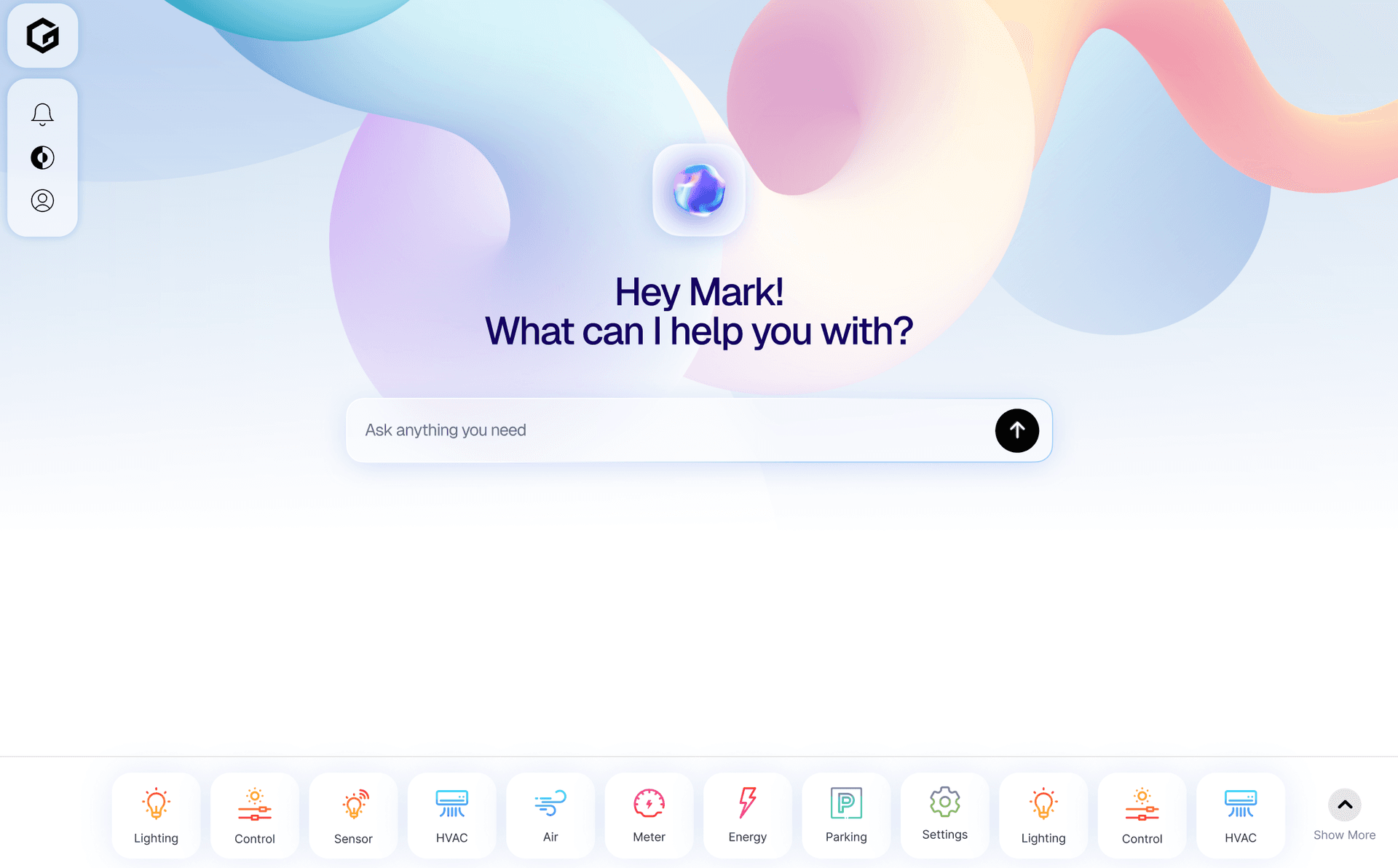

GrydAI is a domain specialized autonomous agent for facility managers, operations engineers, and sustainability leads. It lives behind a single chat surface. Underneath that surface, every reply is the result of a multi step loop that planned the work, fetched live data from your sensors, ran real Python on the numbers, cited the right edition of the right standard, and wrote a row to an audit log so finance can verify what happened later.

A short list of what it actually does, end to end.

It ingests live indoor air quality, energy, and occupancy streams. It runs analyses on top of those streams: weather normalized energy use intensity, peak demand profiles, anomaly detection, thermal comfort scores, after hours waste, scope two emissions. It generates branded reports in PDF, DOCX, PPTX, and XLSX, all visually consistent with the chat UI. It sends emails through Resend with the report attached. It schedules itself: the same audit that took three iterations to produce in chat can be set to run every quarter on a cron and arrive in someone's inbox without anyone touching the app. It monitors quietly through an ambient heartbeat that only speaks when it has something to say. And for buildings that run on Grydsense hardware, the agent is also wired to take real control actions, things like HVAC setpoint changes, lighting scenes, and schedule overrides, every one of which sits behind an approval gate and writes the before and after state to an audit log. That last surface is its own post; I am not going to elaborate on it here.

Three Principles That Shaped Every Other Decision

Before any of the technical choices matter, three rules drive everything else. They sound like values. They are actually constraints, and they show up in the stack in places you would not expect.

No estimated numbers. Every number in a GrydAI answer comes from a sensor row, a meter row, or a Python computation over those rows. The agent does not approximate, and we do not let the language model do arithmetic on data the agent could have measured. If a regression's R squared comes back below half, the agent reports the unnormalized number and explains why normalization would not be reliable. The reason a real Python sandbox is in the stack at all is this rule.
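As a rough illustration of the R squared rule, here is a minimal sketch in plain Python. The names `r_squared`, `choose_eui`, and `EuiResult` are ours for illustration, not the production helpers, and the 0.5 floor is simply "below half" from the rule above.

```python
from dataclasses import dataclass
from typing import Sequence

R2_FLOOR = 0.5  # "below half" in the text; the constant name is an assumption


def r_squared(observed: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        return 0.0  # flat series: nothing for a regression to explain
    return 1.0 - ss_res / ss_tot


@dataclass(frozen=True)
class EuiResult:
    value: float
    normalized: bool
    note: str


def choose_eui(
    raw_eui: float,
    normalized_eui: float,
    observed: Sequence[float],
    predicted: Sequence[float],
) -> EuiResult:
    """Report the normalized figure only when the fit is good enough."""
    r2 = r_squared(observed, predicted)
    if r2 < R2_FLOOR:
        note = f"R^2 = {r2:.2f} is below {R2_FLOOR}; reporting the unnormalized figure"
        return EuiResult(raw_eui, False, note)
    return EuiResult(normalized_eui, True, f"R^2 = {r2:.2f}; weather normalization applied")
```

The point of the gate is that the fallback is still a measured number, with the reason attached, rather than a shaky normalized one.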

Standards are floors, not ceilings. The agent's domain literacy is part of the architecture, not an afterthought. ASHRAE 55, 62.1, 90.1, LEED v4.1, WELL v2, WHO Air Quality Guidelines 2021, India CPCB NAAQS, and ECBC live in versioned tables tagged by edition. The agent has been taught the gaps explicitly. The WHO annual PM2.5 guideline sits at five micrograms per cubic meter, the India NAAQS annual limit at forty. The agent reports both, names the gap, and lets the operator decide. ASHRAE 62.1 does not have an absolute CO2 limit, has not had one in nearly thirty years, and the agent will correct that misconception every single time someone asks.
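To make "reports both, names the gap" concrete, here is a small sketch of thresholds held as edition-tagged data. The `Threshold` shape and the `compare` and `gap` helpers are hypothetical; the two PM2.5 values are the annual figures quoted above, and the NAAQS edition label is our assumption.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Threshold:
    standard: str
    edition: str
    pollutant: str
    averaging: str
    limit_ug_m3: float


THRESHOLDS = [
    Threshold("WHO Air Quality Guidelines", "2021", "PM2.5", "annual", 5.0),
    # Edition label below is an assumption; the post does not name it.
    Threshold("India CPCB NAAQS", "2009", "PM2.5", "annual", 40.0),
]


def compare(pollutant: str, value_ug_m3: float) -> List[Dict[str, object]]:
    """Evaluate one reading against every standard that covers the pollutant."""
    return [
        {
            "standard": t.standard,
            "edition": t.edition,
            "limit": t.limit_ug_m3,
            "exceeds": value_ug_m3 > t.limit_ug_m3,
        }
        for t in THRESHOLDS
        if t.pollutant == pollutant
    ]


def gap(pollutant: str) -> float:
    """Spread between the strictest and the most lenient limit."""
    limits = [t.limit_ug_m3 for t in THRESHOLDS if t.pollutant == pollutant]
    return max(limits) - min(limits)
```

A reading of 22 micrograms per cubic meter passes one standard and fails the other, which is exactly the case where the operator needs to see both rows.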

Mutations are diffs. Every change to physical state writes a row to an audit log with the before, the after, the agent's reasoning, and the user who approved the action. Setpoints, lighting scenes, schedules, alarm acknowledgements. Nothing physical happens silently. Email is intentionally ungated by product decision so the agent can deliver reports without a confirmation click on every send, but every send is still audit logged. The agent has read access to its own log via a tool, which means it can recall what it did to your building last Tuesday before it does the thing again.
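A sketch of what such a row could look like, under our own assumptions about field names. `AuditRow`, `changed_fields`, and `record_mutation` are hypothetical, and the real table may carry more columns.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, Optional, Tuple


@dataclass(frozen=True)
class AuditRow:
    action: str
    target: str
    before: Dict[str, Any]
    after: Dict[str, Any]
    reasoning: str
    approved_by: Optional[str]  # None only for ungated actions such as email
    recorded_at: str


def changed_fields(before: Dict[str, Any], after: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {field: (old, new)} for every field that differs."""
    keys = sorted(set(before) | set(after))
    return {k: (before.get(k), after.get(k)) for k in keys if before.get(k) != after.get(k)}


def record_mutation(
    action: str,
    target: str,
    before: Dict[str, Any],
    after: Dict[str, Any],
    reasoning: str,
    approved_by: Optional[str],
    requires_approval: bool = True,
) -> AuditRow:
    """Build the audit row; refuse gated actions that nobody approved."""
    if requires_approval and not approved_by:
        raise PermissionError(f"{action} on {target} needs an approver")
    return AuditRow(
        action=action,
        target=target,
        before=before,
        after=after,
        reasoning=reasoning,
        approved_by=approved_by,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )
```

Keeping the full before and after snapshots, rather than only the diff, is what lets the agent answer "what was the setpoint before last Tuesday" from the log alone.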

The Stack at a Glance

We made a deliberate choice to keep this on a small, opinionated set of platforms so we spend our time building the agent and not running infrastructure.

The application is Next.js on Vercel, App Router, Fluid Compute. The agent layer is the Vercel AI SDK 7 beta and its ToolLoopAgent, which gives us a streaming loop with first class hooks for context shaping, tool gating, and observability. The reasoning models we use are hosted on Groq Cloud, currently GPT OSS 120B as the primary, with GPT OSS 20B and Qwen 3 32B as supported alternates. The data plane is Supabase across the board: Postgres for sensor torrents, pgvector for retrieval over your own corpus of manuals and specs, Realtime Broadcast for live sensor pushes to dashboards, Storage for generated artifacts, and Auth with row level security for tenancy. Supermemory holds the user soul side of memory: preferences, anchors, prior analysis recall. Web search is Exa primary, Tavily fallback. Email is Resend. Cron is Vercel Cron, a single platform, five entries. Code execution is E2B, with a custom Firecracker microVM image we maintain. Document generation rides on the Anthropic pdf, xlsx, docx, and pptx skills mounted into the sandbox, composed with our own brand aware Python helpers. UI artifacts render through shadcn/ui charts, which is Recharts under the hood, and Tremor for KPI cards and dashboard primitives. Observability flows through Langfuse via the SDK's OpenTelemetry hooks. The brand voice carries Geist Sans, JetBrains Mono, and Tiny5 from the chat header through the PDF export, with a single typography hierarchy used in both places.

That is the stack. None of it is exotic. The interesting part is how it composes.

The Agent Loop

Every chat turn runs a loop with up to two hundred iterations and a continuation checkpoint at seven hundred and twenty seconds, just under the eight hundred second function ceiling on Vercel Pro. Two hundred is a backstop, not a target. Most answers converge in three to twelve iterations. The cap matters for the long jobs, like a quarterly audit, where a real answer can legitimately fan out into thirty or forty data fetches before it lands.
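The two limits can be pictured as a small control loop. This is an illustrative Python sketch, not the production route, which runs on the AI SDK in TypeScript. `run_loop`, `step`, and the status strings are hypothetical names, and the clock is injectable only so the behavior is easy to check.

```python
import time
from typing import Callable, Tuple

MAX_ITERATIONS = 200      # backstop from the text, not a target
CHECKPOINT_SECONDS = 720  # continuation checkpoint, under the 800 second ceiling


def run_loop(
    step: Callable[[int], bool],
    clock: Callable[[], float] = time.monotonic,
) -> Tuple[str, int]:
    """Call step(i) until it reports completion, the time budget, or the cap.

    Returns (status, iterations_completed).
    """
    started = clock()
    for i in range(1, MAX_ITERATIONS + 1):
        # Check the time budget before starting another iteration.
        if clock() - started >= CHECKPOINT_SECONDS:
            return ("checkpoint", i - 1)
        if step(i):
            return ("done", i)
    return ("cap", MAX_ITERATIONS)
```

A "checkpoint" result is a handoff, not a failure: the run would be resumed from its scratchpad in a fresh invocation.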

Each iteration has the same shape. A prepareStep hook runs before the model is called and gets to reshape the conversation: structurally prune old reasoning blocks, gate the tool registry by phase (read only first, full set after), inject a soft warning if the agent has been pounding the same tool too many times. The model is then invoked with a streaming text call against the active tool registry. Tools execute, sometimes in parallel, sometimes serially behind an approval gate. Oversized tool results are persisted to a private Supabase Storage bucket with a manifest and a fifty row inline preview; the agent gets back a handle and can page through the full payload via a read tool when it actually needs the rest. An onStepFinish callback writes a row to the agent run scratchpad, which is just a Postgres table indexed for fast replay.
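The overflow step is the easiest part of the iteration to show in isolation. Below is a minimal sketch, assuming a row threshold for "oversized" (the real cutoff is not stated here) and using an in-memory dict as a stand-in for the private Storage bucket. `package_tool_result` and `read_overflow` are hypothetical names.

```python
from typing import Any, Dict, List

PREVIEW_ROWS = 50      # inline preview size from the text
MAX_INLINE_ROWS = 200  # assumption: the actual "oversized" threshold is not given

# Stand-in for a private storage bucket; maps handle -> full payload.
FAKE_BUCKET: Dict[str, List[Dict[str, Any]]] = {}


def package_tool_result(call_id: str, rows: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Return small results inline; persist large ones and hand back a handle."""
    if len(rows) <= MAX_INLINE_ROWS:
        return {"inline": True, "rows": rows}
    handle = f"overflow/{call_id}.json"
    FAKE_BUCKET[handle] = rows
    return {
        "inline": False,
        "handle": handle,
        "manifest": {"row_count": len(rows), "columns": sorted(rows[0].keys())},
        "preview": rows[:PREVIEW_ROWS],
    }


def read_overflow(handle: str, offset: int, limit: int) -> List[Dict[str, Any]]:
    """Page through a persisted payload, as the read tool would."""
    return FAKE_BUCKET[handle][offset : offset + limit]
```

The model only ever sees the manifest and the preview unless it explicitly asks for more, which keeps a hundred thousand meter rows out of the context window.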

The thing the loop owes the user, from the very first byte of the response, is transparency. As the model is reasoning, the route streams typed events onto the wire: step lifecycle markers, thinking phase tags, sandbox stdout and stderr, in flight chart frames, microcompaction notices. The chat UI renders them as transient pills that fade and as persistent typed artifacts that survive a page reload. Charts, tables, KPI cards, building strips, downloadable file links, compliance grids, alert configurations. They are not server rendered HTML; they are typed data parts that the client hydrates into React components. That is the same schema the agent uses to author the artifact, which means there is one source of truth for what a chart looks like, whether it lands in chat or in a PDF.

The reason the streaming feels worth the engineering is trust. A facility manager watching the agent narrate "running the energy query on Unity Bank Mumbai for Q3" trusts the eventual fourteen page report more than she would trust the same report arriving silently five minutes later. The transparency is not a feature; it is a trust mechanism.

Memory, in Three Stores

Memory is split across three stores, each picked because it does one thing better than the alternatives.

Supermemory holds the soul. Per user container tags scope every memory; we mint scoped keys per workspace with rate limits and expiry so even a leaked key cannot read another tenant's data. This is where "the user prefers Fahrenheit" lives, and "this user runs quarterly audits on Unity Bank," and the kind of fact a chief engineer would jot down in a notebook rather than write a SQL query for.

Supabase pgvector holds the corpus. Building manuals, equipment specs, standards editions, incident reports, commissioning documents. Halfvec at fifteen hundred and thirty six dimensions to halve storage with negligible recall loss, an HNSW index, a tsvector keyword column, and a hybrid Reciprocal Rank Fusion function that blends the two retrievals. Because it is Postgres, every retrieval is row level security aware: a user only sees documents tied to buildings they have access to.
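Reciprocal Rank Fusion itself is only a few lines. The production version is a Postgres function; the Python below is a sketch of the same scoring idea, with `k = 60` as the conventional smoothing constant and therefore an assumption about our own tuning.

```python
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Fuse ranked lists of document IDs.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. Ties break on document ID for determinism.
    """
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Usage: one list from the vector index, one from the keyword column.
vector_hits = ["chiller-manual", "ahu-spec", "incident-0412"]
keyword_hits = ["ahu-spec", "commissioning-2021", "chiller-manual"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Because the score depends only on rank, the two retrievers never need their raw similarity scores put on a common scale.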

Supabase Postgres itself holds the substrate. Sensor torrents, monthly partitioned with BRIN indexes for ordered time series. Audit logs, agent runs, scheduled jobs, alert configurations, generated artifacts. Realtime sensor pushes flow over Supabase Realtime Broadcast on per tenant channels, not over Postgres Changes, because Postgres Changes is single threaded and would crumple under a real sensor stream.

Sitting on top of those three stores is a four tier compression strategy that runs between iterations and decides what to do as context climbs. Per turn budgets push oversized tool results to Storage. A structural prune strips old reasoning blocks while preserving tool call IDs. A pre compaction memory flush extracts durable facts to Supermemory and durable building insights to a vector indexed table before any summarization runs, because once you compress a six tool result session into a nine section summary, the fine grained facts are gone forever. Then a fast reasoning model produces a structured nine section summary and the message array resets. The tiers are independent, each cheaper than the next, each preserving more than a flat truncate would. The agent emits a compaction marker into the chat so the user sees it happen, and the audit log records the event.
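The structural prune is the tier that is simplest to show. A minimal sketch follows, assuming messages are dicts with a `parts` list and that reasoning parts are tagged `"reasoning"`; that shape and the `keep_last` parameter are our illustration, not the SDK's exact types.

```python
from typing import Any, Dict, List

Message = Dict[str, Any]


def structural_prune(messages: List[Message], keep_last: int = 2) -> List[Message]:
    """Drop reasoning parts from all but the last `keep_last` messages.

    Tool call and tool result parts are left untouched, so their IDs
    still pair up after pruning. The input list is not mutated.
    """
    cutoff = max(len(messages) - keep_last, 0)
    pruned: List[Message] = []
    for index, message in enumerate(messages):
        if index >= cutoff:
            pruned.append(message)
            continue
        parts = [p for p in message["parts"] if p["type"] != "reasoning"]
        pruned.append({**message, "parts": parts})
    return pruned
```

Nothing here calls a model, which is why this tier is cheap enough to run on every iteration.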

Real Code on Real Data

This is the piece that changes what the agent can actually do.

Every chat session has a dedicated E2B sandbox. A Firecracker microVM with about a hundred and fifty millisecond cold start, two virtual CPUs, four gigabytes of RAM, and a thirty minute idle timeout that pauses rather than kills. Pause and resume preserves kernel state. A pandas dataframe loaded in turn one is still resident in turn seven, which means the agent can run a multi step analysis without paying the cost of rereading a hundred thousand rows of meter data on every iteration.

The sandbox runs a custom image we maintain called grydai-toolkit. It bundles five things on top of the stock E2B code interpreter. A Python package of building management helpers exposing query, styles, and artifacts modules. The brand fonts as TTF files. A gryd.fonts Python module that registers them with reportlab, python-docx, and python-pptx so a generated PDF uses the same H1 as the chat UI. The Python and Node libraries the four Anthropic document skills depend on. And the Anthropic skills themselves mounted read only at /skills, with their bundled scripts available from inside the sandbox.

The agent talks to the sandbox through one tool, analyze_in_sandbox, with a description that explains what is preinstalled, that kernel state persists across calls, when to use it instead of the simpler aggregation helpers, and how to surface charts and files. The tool runs the code, streams stdout and stderr back into the chat as transient parts so the user watches the work happen, picks up matplotlib pngs and decides whether to inline them as base64 or upload to Storage based on size, and pauses the sandbox after each call so we are not billed for user read time.

What this gives us, concretely, is the ability to do the math. A weather normalized EUI is a regression on heating and cooling degree days plus a weekend dummy plus occupancy. A peak demand is a ninety fifth percentile of fifteen minute kW. A power factor analysis is a calculation across voltage and current vectors. An anomaly investigation runs a sklearn IsolationForest. A psychrometric chart renders through CoolProp. A thermal comfort score uses pythermalcomfort to compute PMV and PPD per zone. We run all of it in real Python, on real meter data, and we surface the result in the chat as a chart artifact and in the PDF as a bound table. The agent's number is not an estimate. It is the answer.
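Peak demand is a good example of math that is trivial in code and unreliable in a language model's head. Here is a standard-library sketch of the ninety fifth percentile over fifteen minute kW readings. In the sandbox this would more likely be a pandas or NumPy one-liner; `percentile` and `peak_demand_kw` are illustrative names, and linear interpolation between ranks is our choice of method.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """q-th percentile (0 to 100) with linear interpolation between ranks."""
    if not values:
        raise ValueError("percentile of an empty series is undefined")
    ordered = sorted(values)
    position = (len(ordered) - 1) * q / 100.0
    lower = math.floor(position)
    upper = math.ceil(position)
    fraction = position - lower
    return ordered[lower] + (ordered[upper] - ordered[lower]) * fraction


def peak_demand_kw(interval_kw: Sequence[float]) -> float:
    """Peak demand as the 95th percentile of 15 minute kW readings."""
    return percentile(interval_kw, 95.0)
```

Using a high percentile instead of the maximum keeps a single spurious meter spike from defining the building's peak.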

The security boundary on the sandbox is layered. Network egress is restricted to Supabase, Resend, Groq, OpenAI for embeddings, Exa, plus the package registries needed for one time bootstrap. Filesystem isolation comes from Firecracker. The sandbox never gets the Supabase service role key; it gets a JWT signed for one user and one building, valid for thirty minutes, scoped through app metadata. The microVM is the safety boundary, not a sanitizer.

Skills, Standards, and Brand

The agent has a library of playbooks called skills, each a markdown file with YAML frontmatter, lazy loaded on demand. Energy audit, comfort analysis, IAQ deep dive, anomaly investigation, occupancy optimization, predictive maintenance, compliance check, incident response, quarterly report. Plus the four Anthropic document skills the domain skills compose with for output generation.

A skill is a tested, opinionated procedure. The energy audit skill, for example, has six phases. Gather data. Calculate EUI. Benchmark. Drill down. Recommend. Generate the report. Each phase tells the agent what tools to call, what thresholds to cite to which standard edition, what to do if data is missing (ask the user, do not estimate), and what validation gates to apply. The skill is the corrective for the language model's natural pull toward summarizing and merging. It is a script with explicit gates, written by an engineer, that the agent loads when the user asks something that matches.

Standards live as data, not as code. ASHRAE editions are tagged. The grid emission factors are tagged. India 2024 sits at 0.727 kilograms of CO2 per kilowatt hour, sourced from CEA Database v20.0; when the next CEA edition publishes, we update the row and every Scope 2 calculation that runs after that point uses the new factor. The agent's number always carries the version of the factor it used.
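The Scope 2 arithmetic is deliberately boring: energy times factor, with the factor's version carried along. A minimal sketch, using the India 2024 value quoted above; `GridFactor` and `scope2_emissions` are hypothetical names and the row shape is an assumption.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class GridFactor:
    region: str
    year: int
    kg_co2_per_kwh: float
    source: str


# Value and source as quoted in the text.
INDIA_2024 = GridFactor("IN", 2024, 0.727, "CEA Database v20.0")


def scope2_emissions(kwh: float, factor: GridFactor) -> Dict[str, object]:
    """Location-based Scope 2 emissions in tonnes, tagged with the factor used."""
    tonnes = kwh * factor.kg_co2_per_kwh / 1000.0
    return {
        "tonnes_co2": tonnes,
        "kg_co2_per_kwh": factor.kg_co2_per_kwh,
        "factor_version": f"{factor.region} {factor.year}, {factor.source}",
    }
```

For example, 100,000 kWh against this factor works out to 72.7 tonnes, and the result says which edition produced it.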

Brand consistency is part of the skill contract. Every PDF, DOCX, PPTX, and XLSX a skill produces uses the gryd.styles helpers to register the brand fonts and apply the same nineteen role typography hierarchy that the chat UI uses. The H1 in a generated audit report is the same Geist Sans at seventy two pixels with negative four percent tracking that you see in the chat header. The chip on a numeric dashboard tile and the chip in a PDF table use the same JetBrains Mono at twelve pixels with eighteen percent letterspacing. A user who downloads a PDF from a chat should never feel like it came from a different product.

Cron, Ambient Monitoring, and a Brief Note on Controls

Time is the dimension that makes the agent ambient instead of reactive. Five Vercel Cron entries cover every recurring path. A one minute dispatcher that runs user scheduled tasks whose next run time is due. A five minute alert evaluator that checks active alert conditions against the latest sensor window, respects cooldowns, and dispatches notifications. A nightly aggregation. A weekly digest. A fifteen minute heartbeat that fires the agent against a per user checklist and is wrapped in a suppression layer so it stays quiet when nothing is wrong.
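The cooldown check inside the alert evaluator is worth spelling out, because it is the difference between one useful notification and forty. A sketch under our own assumptions: `should_notify` is a hypothetical name, and the alert is modeled as a simple upper threshold.

```python
from datetime import datetime, timedelta
from typing import Optional


def should_notify(
    value: float,
    threshold: float,
    now: datetime,
    last_fired: Optional[datetime],
    cooldown: timedelta,
) -> bool:
    """Fire only when the condition holds and the cooldown has elapsed."""
    if value <= threshold:
        return False  # condition not met, stay quiet
    if last_fired is not None and now - last_fired < cooldown:
        return False  # still cooling down from the previous notification
    return True
```

With a five minute evaluator and a one hour cooldown, a CO2 excursion that lasts all afternoon produces a handful of messages rather than one per tick.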

The agent itself can call a schedule_task tool. Which means a facility manager can finish her audit, ask the agent to run the same thing every quarter on the first of January, April, July, and October at nine in the morning Asia Kolkata, and that intent becomes a row in the scheduled_jobs table. Three months later the cron fires, an isolated agent run boots with a cheap context, the audit runs, the PDF lands in the right inbox, and the audit log records the action. Nobody touched the app.
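Here is a sketch of how that quarterly intent could resolve to a concrete next run time. The cron string is the standard five field form for the schedule described; `next_quarterly_run` is a hypothetical helper, and IST is modeled as a fixed UTC+05:30 offset, which is safe because India does not observe daylight saving.

```python
from datetime import datetime, timedelta, timezone

IST = timezone(timedelta(hours=5, minutes=30), "IST")  # fixed offset, no DST
QUARTER_MONTHS = (1, 4, 7, 10)
CRON_EXPR = "0 9 1 1,4,7,10 *"  # 09:00 on the 1st of Jan, Apr, Jul, Oct


def next_quarterly_run(now: datetime) -> datetime:
    """Next 09:00 IST on the first day of a quarter, strictly after `now`.

    `now` must be timezone-aware.
    """
    local = now.astimezone(IST)
    for year in (local.year, local.year + 1):
        for month in QUARTER_MONTHS:
            candidate = datetime(year, month, 1, 9, 0, tzinfo=IST)
            if candidate > local:
                return candidate
    raise RuntimeError("unreachable: next year always has a candidate")
```

The dispatcher then only needs to compare the stored next run time against the current instant once a minute.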

For buildings on Grydsense hardware, the same approval gated mutation pattern extends to physical control actions. HVAC setpoints, lighting scenes, schedule overrides, alarm acknowledgements. Every one writes a before and after to the audit log. We are not going to elaborate further here; that surface deserves its own post.

A Walk Through, End to End

Picture the morning the agent earns its keep. The facility manager opens GrydAI and writes a single sentence. Run a quarterly energy audit on Unity Bank Mumbai for Q3, weather normalized, with peer benchmarking, and email the PDF to the operations alias when it is done.

The agent loads the energy audit skill. It fans out four parallel data fetches against Supabase: energy readings, IAQ readings, occupancy readings, building metadata. The energy result is large; it gets persisted to the overflow bucket with a preview, and the agent reads the rest from the bucket via a paged tool. It boots the sandbox; pandas reads the meter CSV directly from a sixty second signed URL, no round trip through the application server. It calculates raw EUI in both kilowatt hours per square meter per year and kBtu per square foot per year. It pulls heating and cooling degree days from NASA POWER through web fetch, runs the regression in the sandbox, gets an R squared of 0.78, and reports the weather normalized number. The kernel state survives across the next five iterations, which is why the dataframe loaded in iteration three is still in memory in iteration eight when the agent calculates after hours waste, peak load factor, and power factor.
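The dual unit EUI in that step is a fixed conversion, sketched below. The function names are ours; the constants are the standard ones (1 kWh is 3.412142 kBtu, 1 square meter is 10.7639104 square feet).

```python
KBTU_PER_KWH = 3.412142
SQFT_PER_SQM = 10.7639104


def eui_kwh_per_sqm(annual_kwh: float, floor_area_sqm: float) -> float:
    """Raw energy use intensity in kWh per square meter per year."""
    if floor_area_sqm <= 0:
        raise ValueError("floor area must be positive")
    return annual_kwh / floor_area_sqm


def to_kbtu_per_sqft(eui_kwh_sqm: float) -> float:
    """Convert kWh/m2/yr to kBtu/ft2/yr."""
    return eui_kwh_sqm * KBTU_PER_KWH / SQFT_PER_SQM


# Usage: a hypothetical 8,000 m2 building using 1.2 GWh in a year.
eui_metric = eui_kwh_per_sqm(1_200_000, 8_000)
eui_imperial = to_kbtu_per_sqft(eui_metric)
```

Reporting both units matters because Indian benchmarks are usually quoted per square meter while many peer datasets are per square foot.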

It searches the indexed corpus for ASHRAE 90.1 and ECBC references, builds six recommendations with dollar and tCO2e estimates against the versioned grid factor, and writes a fourteen page reportlab PDF and a three sheet openpyxl XLSX, both styled through gryd.styles. It uploads them to Storage, gets signed URLs, and calls send_email with both attachments. The email lands at operations at unitybank dot com. The agent emits a final answer with the executive summary, the report URL, and a link artifact that survives a page reload.

She types one more thing. Schedule this every quarter. The agent calls schedule_task, the user approves, the row lands in scheduled_jobs, the audit log records it. Three months later the cron fires the same skill on a clean isolated session and the next report arrives in her inbox without her touching the app. The next time she opens the chat, Supermemory recalls that she runs quarterly audits on this building, that she prefers operative temperature framing, and that she sends deliverables to the operations alias. She types compare Q4 to Q3 and the agent already knows what she means.

What We Actually Built

GrydAI is a small set of architectural decisions that happen to compose into something useful. A streaming agent loop with serious context discipline. A sandbox per session that lets the language model do real math instead of guessing. A skill library that turns engineering procedures into markdown the model can read. A memory split that respects the difference between a fact about a person and a fact about a building. A typed artifact system that lets the chat UI render the same data structures the PDF prints. A single platform cron with five entries that turns the agent into something that monitors a building when no one is watching. An audit log that writes a row every time anything changes. And an extension surface where adding a new sensor type, a new email provider, a new web search backend, or a new building controls vendor is one file and one line in a registry.

None of that, on its own, is novel. The combination is what gives a facility manager an agent she can trust at six in the morning when she has three hours and a board call to prepare for. Not because the model got smarter. Because the infrastructure around the model finally lets it behave like an engineer.

In part one I argued that buildings should not need interpreters. The architecture is what makes that promise honest. The next surface is opening the same pattern up to vendors and to the controls layer for buildings that run on Grydsense, so the agent does not just understand your building, it operates it. More on that soon.